One of the big questions often asked about research and education (R&E) networking is: why? Why, when commercial internet networks are so large, is there still a need for dedicated research networks? Surely organisations can simply buy commercial off-the-shelf connectivity, now that commercial service providers offer 10 and even 100Gbit/s network connections.

In February 2017, members of GÉANT and AARNet were working on tuning the performance of flows between Europe and Australia over a 10Gbit/s path; Niall Donaghy, Richard Hughes-Jones and Mian Usman of the GÉANT Network team then decided to compare the alternative offerings to see how R&E networking measures up to commercial services.

Mian Usman, Network Architect at GÉANT, explains what was done:

The test

The primary purpose of the test was to assess the ability of R&E networks and two separate commercial providers to support sustained, high-capacity intercontinental file transfers.

London and Sydney, Australia, were selected as the two locations in order to offer the biggest test for both R&E and commercial service providers. At each location, a high-performance Data Transfer Node (DTN) with full 10Gbit/s line-rate access was used to simulate a large file transfer.

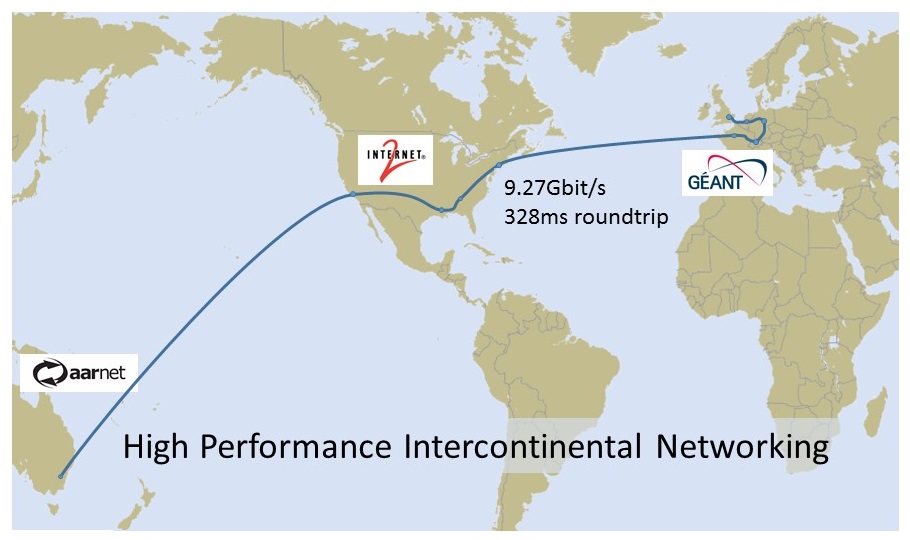

For the R&E test, the networks used were GÉANT (Europe), Internet2 (North America) and AARNet (Australia). To make the GÉANT test scenario more representative of real-world conditions, the traffic from the London DTN was routed via Amsterdam, Frankfurt, Geneva and Paris before crossing Internet2 to its destination on AARNet.

To test two different commercial ISPs, GÉANT used its existing transit services and forced the traffic to be routed via the commercial provider under test. This simulated the scenario of a large institution buying its connectivity from a commercial ISP.

To measure the achievable throughput, TCP buffer sizes from 64Kbytes to 400Mbytes were tested to find the ultimate performance of each connection.
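The buffer range matters because a single TCP flow can have at most one window of unacknowledged data in flight per round trip, so its throughput is capped at window / RTT. The sketch below computes the bandwidth-delay product (BDP) for a 10Gbit/s path and the window-limited ceiling for a few of the tested buffer sizes. It assumes a London-Sydney round-trip time of 300 ms; that figure is an illustrative assumption, not one reported in the test.

```python
# Minimal sketch: how large must a TCP window (buffer) be to fill a long path?
# ASSUMPTION: a London-Sydney round-trip time of 300 ms, chosen for
# illustration only; the measured RTT is not given in the article.

RTT_S = 0.300        # hypothetical round-trip time in seconds
LINE_RATE = 10e9     # 10Gbit/s DTN access speed, in bit/s


def bdp_bytes(rate_bit_s: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the path full."""
    return rate_bit_s * rtt_s / 8


def window_limited_rate(window_bytes: float, rtt_s: float) -> float:
    """Upper bound on single-flow throughput (bit/s) when the window is the limit."""
    return window_bytes * 8 / rtt_s


print(f"BDP at 10Gbit/s: {bdp_bytes(LINE_RATE, RTT_S) / 1e6:.0f} Mbytes")
for label, window in [("64Kbyte", 64 * 1024), ("200Mbyte", 200e6), ("400Mbyte", 400e6)]:
    # A flow can never exceed the access line rate, whatever its window.
    rate = min(window_limited_rate(window, RTT_S), LINE_RATE)
    print(f"{label:>9} buffer -> at most {rate / 1e9:.3f} Gbit/s")
```

Under that assumed RTT, a 64Kbyte buffer caps a flow at under 2Mbit/s, and only buffers approaching the roughly 375Mbyte BDP can fill a 10Gbit/s path, which fits the best R&E result being achieved with the 400Mbyte buffer. If the RTT assumption holds, the 200Mbyte ceiling of about 5.3Gbit/s is well above the rates later measured on the commercial paths, suggesting those paths were limited by something other than the window.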

A key factor in this testing is that it was conducted ad hoc: for none of the tests were the Network Operations Centres (NOCs) of GÉANT, Internet2, AARNet or the commercial providers informed in advance. This was done to replicate more accurately the real-world scenario of a research team starting an unannounced, ad hoc transfer.

The results

The results of the tests were startling. Using the GÉANT network, the DTNs achieved a sustained transfer rate of 9.27Gbit/s (using the 400Mbyte TCP buffer size).

Of the two commercial providers, the first reached a maximum of 0.9Gbit/s using a 200Mbyte TCP buffer. With a 300Mbyte TCP buffer, a speed of 1.72Gbit/s was achieved, but between 35 and 40 seconds into the transfer the traffic dropped to zero. It is possible that the ISP actively dropped the traffic because it considered it a DoS attack. Nonetheless, it is important to note that in the 35 seconds before the blocking, the transfer rate had already ramped up to its maximum for that commercial path, so even without this apparent proactive DoS mitigation the transfer rate would not have increased. Certainly it is inconceivable that researchers should have to announce their data transfers to commercial NOCs before starting them.

For the second ISP, a peak of 110Mbit/s was achieved, but this time the connection was terminated after 15 seconds (again, most likely the result of a false positive from proactive DoS mitigation).
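To put these rates in perspective, the sketch below converts them into the idealised time needed to move a dataset. The 1TB dataset size is a hypothetical example, and the commercial figures optimistically assume that the peak rate could be held for the whole transfer, which, as described above, it could not.

```python
# Minimal sketch: idealised time to move a dataset at the rates reported above.
# ASSUMPTIONS: a hypothetical 1TB (10**12 bytes) dataset, and the peak rate
# sustained for the entire transfer (the commercial flows were actually cut off).

DATASET_BYTES = 10**12

RATES_BIT_S = {
    "R&E path (GÉANT/Internet2/AARNet)": 9.27e9,
    "Commercial ISP 1 (peak)": 1.72e9,
    "Commercial ISP 2 (peak)": 110e6,
}


def transfer_hours(size_bytes: int, rate_bit_s: float) -> float:
    """Transfer time in hours at a constant rate, ignoring protocol overhead."""
    return size_bytes * 8 / rate_bit_s / 3600


for name, rate in RATES_BIT_S.items():
    print(f"{name:<36} {transfer_hours(DATASET_BYTES, rate):6.2f} h")
```

At these rates, 1TB takes roughly a quarter of an hour over the R&E path, about 1.3 hours at the first ISP's peak and about 20 hours at the second's.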

In addition, the R&E network tests exhibited zero retransmits, compared with between 2 and 4% on the commercial ISPs. The absence of retransmits improves the performance of transfers and applications, and is the result of careful network capacity planning.
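Why is zero loss so important on a long path? A widely cited rough model from Mathis, Semke, Mahdavi and Ott (1997) bounds the steady-state throughput of a Reno-style TCP flow at about (MSS / RTT) x C / sqrt(p), where p is the loss-event rate and C = sqrt(3/2). The sketch below inverts that bound to estimate the loss budget for the 9.27Gbit/s R&E result. It assumes a 300 ms RTT and an 8948-byte MSS (a 9000-byte MTU minus IP, TCP and timestamp headers); neither value comes from the test. Modern congestion control such as CUBIC or BBR reacts to loss differently, and the retransmit percentages above are not loss-event rates in the model's sense, so this is an illustration of sensitivity rather than a prediction of the commercial figures.

```python
import math

# Minimal sketch of the Mathis et al. (1997) model for Reno-style TCP:
#   rate <= (MSS / RTT) * (C / sqrt(p)),  with C = sqrt(3/2)
# ASSUMPTIONS (not from the article): 300 ms RTT, 8948-byte MSS.

C = math.sqrt(3 / 2)
MSS_BYTES = 8948   # hypothetical jumbo-frame MSS
RTT_S = 0.300      # hypothetical London-Sydney round-trip time


def mathis_rate(mss_bytes: float, rtt_s: float, loss: float) -> float:
    """Approximate throughput ceiling (bit/s) for a given loss-event rate."""
    return (mss_bytes * 8 / rtt_s) * (C / math.sqrt(loss))


def max_loss_for_rate(mss_bytes: float, rtt_s: float, rate_bit_s: float) -> float:
    """Largest loss-event rate that still allows the target rate (model inverted)."""
    return (C * mss_bytes * 8 / (rtt_s * rate_bit_s)) ** 2


budget = max_loss_for_rate(MSS_BYTES, RTT_S, 9.27e9)
print(f"Loss rate needed to sustain 9.27Gbit/s: below {budget:.1e}")
ceiling = mathis_rate(MSS_BYTES, RTT_S, 1e-6)
print(f"Ceiling at one loss per million packets: {ceiling / 1e9:.2f} Gbit/s")
```

Under these assumptions, sustaining 9.27Gbit/s needs a loss-event rate of roughly one in a billion packets, while even one loss per million packets would cap a Reno-style flow at around 0.3Gbit/s.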

The difference between bandwidth and capacity

The GÉANT tests were performed across the 100Gbit/s transatlantic connections. At the time, GÉANT also operated three 10Gbit/s transatlantic connections between Frankfurt and Washington (now discontinued), so it was decided to repeat the GÉANT performance test with the transatlantic leg rerouted via these 10Gbit/s links. The sustained rate dropped from 9.27Gbit/s over the 100Gbit/s connection to 6.2Gbit/s. This is still far better than either commercial provider achieved.

This drop of nearly 33% in performance is due to the nature of link load-balancing. Every IP traffic flow, or ‘conversation’, between a pair of DTNs (or any other nodes) must traverse the same physical link so that its packets arrive in order. If a conversation were distributed across all three links, the nodes would have to re-order the out-of-order packets; although the data would cross the network more quickly, the re-ordering overhead would decimate performance. Given that our test flow was therefore effectively confined to a single 10Gbit/s link, the reduced performance was most likely due to contention with other flows on that link.

This experiment dramatically demonstrates the performance advantage of using the highest possible backbone bandwidths, and highlights the constraints imposed when very large flows have to traverse lower-bandwidth links, however many of those links there are. Ten 10Gbit/s links might perform similarly to a single 100Gbit/s link for a large number of small flows, as typically seen on commercial networks, but will perform much worse with large flows.
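The per-flow behaviour described above is typically implemented by hashing each packet's flow identifier (its 5-tuple) to choose one of the parallel links, as in ECMP routing or link aggregation. The sketch below is only an illustration: CRC32 stands in for the vendor-specific hash functions real routers use, and the IP addresses (from documentation ranges) and ports are hypothetical.

```python
import zlib
from collections import Counter

# Minimal sketch of per-flow load balancing across parallel links.
# ASSUMPTION: CRC32 over the 5-tuple stands in for a router's real hash.

NUM_LINKS = 3  # e.g. the three 10Gbit/s transatlantic links


def pick_link(src_ip, dst_ip, proto, src_port, dst_port, num_links=NUM_LINKS):
    """Map a flow's 5-tuple to a link index; every packet of a flow gets the same link."""
    key = f"{src_ip}|{dst_ip}|{proto}|{src_port}|{dst_port}".encode()
    return zlib.crc32(key) % num_links


# One large DTN-to-DTN flow (hypothetical addresses and ports).
big_flow = ("192.0.2.10", "198.51.100.20", 6, 50000, 5201)
# Each of its 1000 packets carries the same 5-tuple, so all land on one link
# and the flow can never use more than that single link's 10Gbit/s.
links_used = {pick_link(*big_flow) for _packet in range(1000)}
print("links used by the big flow:", links_used)

# Many small flows (differing source ports) spread across all links, which is
# why several 10Gbit/s links can resemble one larger pipe for typical traffic.
spread = Counter(
    pick_link("192.0.2.10", "198.51.100.20", 6, port, 443)
    for port in range(40000, 41000)
)
print("small flows per link:", dict(spread))
```

The design choice is deliberate: keeping a flow on one link avoids re-ordering, at the cost of limiting any single flow to the capacity of one member link.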

Conclusions

The results of these tests show that, for the high-performance needs of research and education, the advantages of dedicated R&E networking are clear. Commercial networks are run to maximise profit, and their backbones are scaled to meet the needs of the “average” or “typical” user. For them, adding capacity in smaller increments (e.g. multiple 10Gbit/s links aggregated together) makes financial sense and brings no disadvantage for the typical user.

The apparent use of proactive DoS mitigation in the commercial networks, which blocked our high-capacity DTN traffic, indicates that high-performance “super users” are likely to run into problems with unplanned or unapproved activity. In contrast, the R&E networks coped well with the unannounced tests, showing that they can cater for the needs and demands of the community.

As R&E networks are increasingly used for applications such as cloud computing and HPC, the need to support large, unplanned, bursty data flows will continue to grow. Meeting this demand ensures that National Research and Education Networks (NRENs) continue to hold major advantages over commercial alternatives.
